Understanding AI — Part II: Intro to LLMs

Plinko

You’re probably familiar with Plinko, the game popularized on the TV game show, The Price is Right.

A contestant drops a chip down a peg board. The chip bounces randomly between the pegs before falling into a slot associated with a particular prize.

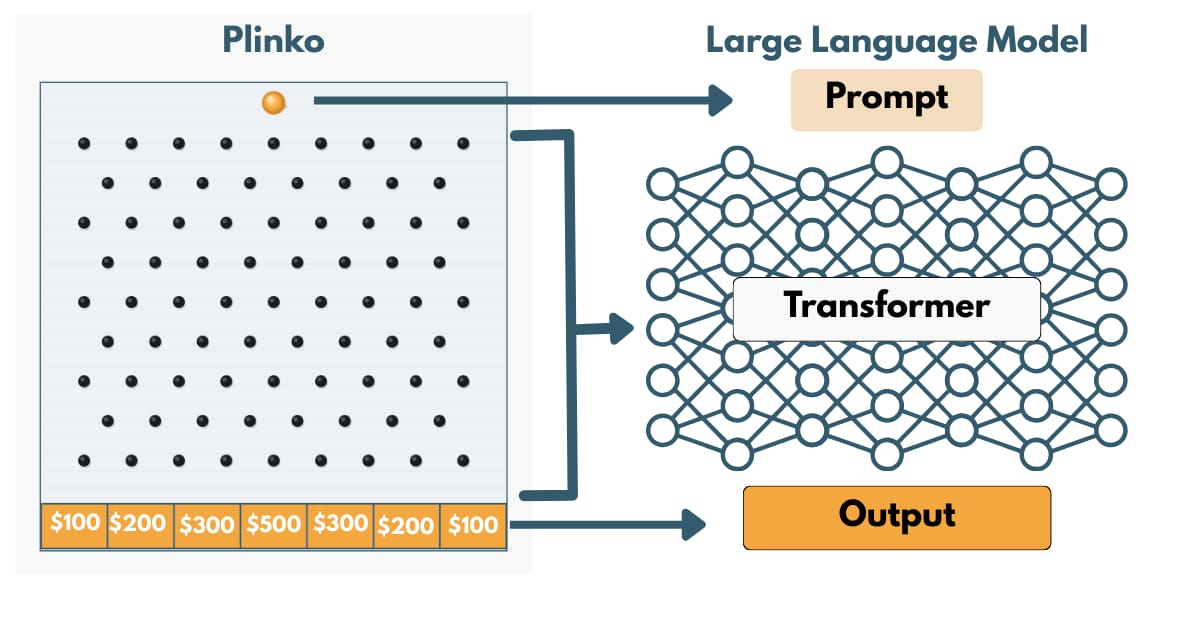

Oddly, this simple game is a good visual metaphor for how Large Language Models or LLMs work.

LLMs are the type of AI model that powers popular AI chatbots such as ChatGPT.

Just as a Plinko player begins with a chip, you begin with an LLM by typing a prompt. Once the chip is dropped in Plinko, it makes its way through the peg board. Your prompt, likewise, is sent through a transformer.

Think of a transformer like a multi-layered peg board of mathematical equations with parameters instead of pegs. And unlike a Plinko board that might have a hundred or so pegs, LLM transformers have billions of these digital pegs, or parameters.

Finally, in Plinko the chip drops into one of the prize slots after randomly bouncing its way through the peg board.

Although there is a degree of randomness to how your prompt moves through a transformer, the digital pegs in an LLM are tuned in a way to guide your prompt to a particular answer.

Let’s dive into the three parts of an LLM (the prompt, the transformer, and the output) to better understand how LLMs work.

The Prompt

In Plinko, everything begins with where you drop the chip.

But before the chip even touches the board, it has a shape and weight. That shape influences how it moves.

Your prompt works the same way.

When you type into an LLM, your sentence is not processed as a single idea. It is first broken into smaller pieces called tokens. Tokens are fragments of language transformed into numbers.

Sometimes they are full words. Sometimes they are parts of words. Sometimes they are only punctuation or even a space.

For example, the model does not see:

“Explain inflation clearly” as a complete phrase.

It sees something more like the phrase broken up as:

“Explain”

“ inflation”

“ clearly”
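To make that concrete, here is a toy sketch in Python. The vocabulary and its ID numbers are made up for illustration. Real tokenizers learn tens of thousands of pieces from data, and each model splits text in its own way.

```python
# Toy vocabulary: a hypothetical mapping from text pieces to integer IDs.
# Real tokenizers learn their pieces from data; these are made up.
TOY_VOCAB = {
    "Explain": 0,
    "Describe": 1,
    " inflation": 2,
    " clearly": 3,
    ".": 4,
}


def tokenize(text):
    """Split text by greedily matching the longest known piece each time."""
    pieces = []
    position = 0
    while position < len(text):
        match = None
        for piece in sorted(TOY_VOCAB, key=len, reverse=True):
            if text.startswith(piece, position):
                match = piece
                break
        if match is None:
            raise ValueError(f"no token for text at position {position}")
        pieces.append(match)
        position += len(match)
    return pieces


def encode(text):
    """Turn text into the list of numbers the model actually receives."""
    return [TOY_VOCAB[piece] for piece in tokenize(text)]


print(tokenize("Explain inflation clearly"))
print(encode("Explain inflation clearly"))
print(encode("Describe inflation."))
```

Notice that the space travels with the word that follows it, and that swapping a single word changes the numbers the model receives.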

Even small wording changes can alter the token sequence. Alter the token sequence and you change the probabilities for potential responses from the model.

That is why:

“Explain inflation.”

produces something different than:

“Describe inflation.”

The model is not interpreting meaning the way a human does. It is evaluating patterns in token sequences and predicting the appropriate response to those patterns.

A good prompt, then, is not about asking better philosophical questions. It is about constructing better token sequences for the model to process.

How does the model process your prompt and its tokens? Through its digital peg board known as a transformer.

Transformers

The “GPT” in ChatGPT stands for Generative Pre-trained Transformer. Generative, in that it produces a response. Pre-trained, in that its billions of digital pegs have been tuned on vast amounts of training data including books, websites, images, audio, etc. The transformer is the prediction engine that uses those pre-trained weights to predict a response from your prompt.

As your prompt moves through layer after layer of the transformer’s digital pegs, the model predicts the next token, a word or a piece of a word, and builds its response one token at a time.

At each step, it chooses among a list of possible next tokens. Each one is assigned a probability. Depending on how the model is tuned, it might not always pick the one with the highest probability. This is why the exact same prompt can produce different responses.
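Here is a small Python sketch of that choice. The candidate tokens and their probabilities are invented for illustration. A real model computes them fresh at every step.

```python
import random

# Hypothetical probabilities for the next token after some prompt.
next_token_probs = {
    " prices": 0.55,
    " costs": 0.25,
    " money": 0.15,
    " bananas": 0.05,
}


def pick_most_likely(probs):
    """Always take the single highest-probability token."""
    return max(probs, key=probs.get)


def sample(probs, rng):
    """Pick a token at random, weighted by its probability."""
    tokens = list(probs)
    weights = list(probs.values())
    return rng.choices(tokens, weights=weights, k=1)[0]


rng = random.Random()  # unseeded, so each run can differ
print("most likely:", pick_most_likely(next_token_probs))
print("sampled:", [sample(next_token_probs, rng) for _ in range(5)])
```

Run it a few times. The “most likely” line never changes, while the sampled line usually does.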

This level of randomness in AI models like ChatGPT is intentional. It brings both benefits and costs, and it can result in unexpected outcomes.

Now that we understand how a transformer deconstructs a prompt and builds a response, let’s discuss the output further.

The Output

Eventually, the Plinko chip lands in a prize slot.

For an LLM, the “prize” is the output.

The randomness factor within the transformer makes the output appear more human, even creative.

It’s also one of the reasons outputs can be incorrect, making it appear the model is “hallucinating.”

That randomness can be adjusted with a “temperature” setting that changes how adventurous the model is allowed to be. Lower temperature makes it hug the most likely path. Higher temperature allows it to explore less probable routes.
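A common way to apply temperature is to divide the model’s raw scores by it before converting them into probabilities. The Python sketch below shows the idea. The scores are invented, and how any particular chatbot exposes or applies the setting is an assumption on my part.

```python
import math


def softmax_with_temperature(scores, temperature):
    """Convert raw scores into probabilities, scaled by temperature."""
    if temperature <= 0:
        raise ValueError("temperature must be positive")
    scaled = [score / temperature for score in scores]
    largest = max(scaled)  # subtract the max to keep exp() from overflowing
    exps = [math.exp(value - largest) for value in scaled]
    total = sum(exps)
    return [value / total for value in exps]


# Hypothetical raw scores for three candidate tokens.
scores = [2.0, 1.0, 0.5]

for temperature in (0.2, 1.0, 2.0):
    probs = softmax_with_temperature(scores, temperature)
    print(temperature, [round(p, 3) for p in probs])
```

At the low temperature, nearly all of the probability piles onto the top-scoring token. At the high temperature, it spreads out across the alternatives.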

This is why LLMs can feel creative and novel. It is also why they can be confidently wrong.

The chip always lands somewhere. The model always produces an answer.

But just as a Plinko board does not understand the value of the prize it lands on, an LLM does not understand the meaning of the words it generates.

It predicts. It assembles. It outputs.

That is the mechanism.

When you see the system this way, the mystery fades. What remains is a powerful probability engine that can be guided, constrained, and applied intentionally to produce very useful outputs.

The Next Step

Now you understand the basics.

A prompt is broken into tokens. Those tokens move through a transformer. An output is assembled one piece at a time.
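Those three steps can be sketched end to end in a few lines of Python. The lookup table here is a made-up stand-in for a transformer. It only looks at the last token, whereas a real model weighs the whole sequence using billions of parameters.

```python
import random

# Hypothetical stand-in for a transformer: for each token, the possible
# next tokens and their probabilities.
TOY_MODEL = {
    "Inflation": {" is": 0.9, " means": 0.1},
    " is": {" rising": 0.6, " a": 0.4},
    " means": {" rising": 1.0},
    " a": {" rise": 1.0},
    " rise": {" in": 1.0},
    " in": {" prices": 1.0},
    " rising": {" prices": 1.0},
    " prices": {".": 1.0},
}


def generate(prompt_tokens, rng, max_new_tokens=10):
    """Append one sampled token at a time until a period or the limit."""
    if not prompt_tokens:
        raise ValueError("prompt must contain at least one token")
    tokens = list(prompt_tokens)
    for _ in range(max_new_tokens):
        choices = TOY_MODEL.get(tokens[-1])
        if choices is None:  # no known continuation, so stop
            break
        next_token = rng.choices(
            list(choices), weights=list(choices.values()), k=1
        )[0]
        tokens.append(next_token)
        if next_token == ".":
            break
    return tokens


print("".join(generate(["Inflation"], random.Random())))
```

Each pass through the loop is one trip down the board: look at what has been written so far, pick the next piece, and repeat.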

Whether you’ve never used AI or use it often, the next time you write a prompt, ask yourself:

Am I being precise, or vague?

What assumptions am I embedding in my wording?

What outcome am I steering the model toward?

Remember, just like in Plinko, you are positioning the chip.

You should also ask:

What data was this model trained on?

Whose perspectives are under- or overrepresented?

How might that shape the answer I receive?

AI models’ digital peg boards are built from patterns in existing human data. That means they reflect our knowledge, our biases, our blind spots, and our inconsistencies.

The model will always give you an answer.

It does not know if it is wrong.

It does not care if it is incomplete.

It does not feel the consequences of being mistaken.

You do. Which means responsibility does not disappear when you use AI. It increases.

Used passively, AI can mislead.

Used intentionally, it can amplify judgment, creativity, and leverage.

The LLM is like a brain, but a limited one. Next week we will begin to understand how LLMs are but a component within a larger digital organism.

If you want to understand LLMs in a bit more depth, I can’t recommend 3Blue1Brown’s video on the topic on YouTube highly enough. They do a fantastic job of illustrating the concepts in this article in an easy-to-understand way.

My goal with The Leap is to provide you each Saturday with the knowledge, tools, and lessons learned to help you get started and keep going toward building your future.

Whether you are making the leap to startups, solo-entrepreneurship, freelancing, side hustles or other creative ventures, the tools and strategies to succeed in each are similar.